It is hard to imagine, but some people actually thought it was perfectly reasonable (and constitutional) for the government to dictate to businesses what they should charge for their products and to dictate to workers what their time was worth. I was working at J.C. Penney in Sacramento and going to grad school at night, but I took time out to send “The Case against Wage and Price Controls” to National Review in July. It became the cover story on September 24, and Bill Buckley later hired me over lunch in San Francisco. If anyone is interested, it’s available at SCRIBD here.

Cato at Liberty


The Unhappy 40th Anniversary of Nixon’s Wage and Price Controls

Forty years ago today, President Richard Nixon shocked the country and the world, not with an escalation of the Vietnam War or a political scandal, but with an edict on the economy that reverberates to this day.

In a surprise televised speech on Sunday evening, August 15, 1971, the president announced that he would immediately impose wage and price controls, slap a 10 percent duty on imports, and suspend the international convertibility of the U.S. dollar into gold. All were to be temporary measures, of course, to promote jobs, dampen inflation, and combat “international money speculators” betting against the dollar. (You can read the entire speech here.)

What came to be known in the international finance world as the Nixon Shock is worth remembering four decades later as a warning against the abuse of executive power over the economy. Nixon’s intervention failed to boost the economy in a sustainable way while causing real damage that took years to correct.

The centerpiece of the Nixon Shock was its controls on prices. In a market economy, freely fluctuating prices are the nervous system that coordinates supply and demand. Yet in one of the more chilling statements delivered by a U.S. president, Nixon told the nation that evening,

I am today ordering a freeze on all prices and wages throughout the United States for a period of 90 days.

The price controls did tame inflation temporarily, but inflation came roaring back within three years to double-digit levels and persisted through the 1970s because of loose monetary policy. A tight lid on a boiling teapot can only contain the steam for a time before it explodes.

The controls continued on gasoline, causing artificial shortages (as price controls usually do) symbolized by gas lines during the 1970s. Only when President Reagan finally lifted the controls on oil and gasoline in 1981 did the specter of short supplies finally disappear. (The 10 percent import surcharge did prove to be temporary, lasting only until the end of 1971.)

Closing the gold window was arguably inevitable given the lack of monetary discipline by the U.S. central bank. By 1976, the dollar and other major currencies were floating freely, which has turned out to work rather well, as Milton Friedman predicted it would. It also turned out that pressure on the dollar to depreciate was not driven by speculators after all but by the surplus of dollars that had been created to finance the Vietnam War and the Great Society.

One lesson of the Nixon Shock is that if politicians are granted “emergency powers” they will tend to abuse them in situations that were never envisioned when the powers were originally granted. A second lesson is that “temporary” measures have a habit of becoming permanent. The big lesson is that the power of politicians over the economy should be limited. Any request for temporary emergency powers should be greeted with the deepest skepticism.

Colorado Court Halts School Voucher Program

Last Friday, a Colorado District Court halted the new and unique Douglas County school voucher program with a permanent injunction. School choice legislation is a little like the Field of Dreams: pass it, and they will sue–and we all know who “they” are. So there’s a tendency to dismiss legal setbacks for the choice movement as purely the result of self-serving monopolists exploiting bad laws or partisan, activist judges. There are certainly cases that fall into that category, but this Colorado ruling isn’t one of them.

Oh, the self-serving monopolists and opponents of educational freedom are no doubt cheering it, but the ruling does not read like the work of a rube or an ideologue, and not all of the state constitutional provisions on which it was based can be dismissed as outdated examples of religious bigotry. The state’s “compelled support” clause, in particular, seems to uphold a fundamentally American idea: that it is wrong to coerce people to pay for the propagation of ideas that they disbelieve. Thomas Jefferson, in his Virginia Statute for Religious Freedom, called this “tyranny.”

Obviously, conventional public schools have been a source of such coercion for a very long time–everyone has to pay for the public schools, despite profound objections they may have to the way those schools teach history, literature, government, biology, or sex education. That’s why we’ve had “school wars” as long as we’ve had government schools. And obviously vouchers offer the advantage of giving parents a much wider range of educational options for their children than do the one-size-fits-few public schools. But despite this advantage, vouchers require all taxpayers to fund every kind of schooling, including types of instruction that might violate some taxpayers’ most deeply held convictions. That’s a recipe for continued social conflict over what is taught.

If there were no alternative to vouchers for providing school choice, perhaps it would make sense to have a debate over which freedoms should take precedence: the freedom of choice of families or the freedom of conscience of taxpayers–and then to sacrifice whichever one was deemed less worthy. But there is an alternative, and it does not require anyone to be compelled to support any particular type of instruction. I discuss this alternative, education tax credits, in a recent Huffington Post op-ed.


Welcoming a New Common Noun: ‘the Mubarak’

Officials in London are looking everywhere but the mirror for places to affix blame for the recent riots. Beyond the immediate-term answer, individual rioters themselves, the target of choice seems to be “social media.” Prime Minister David Cameron is considering banning Facebook, Twitter, and BlackBerry Messenger to prevent people from organizing themselves or reporting the locations and activity of the police.

Never mind substantive grievance. Never mind speech rights. We’ve got scapegoats to find!

[Events like this are nothing but a vessel into which analysts pour their ideological preconceptions, so here’s a sip of mine: Just like a spoiled child doesn’t grow up to be a gracious and kind adult, a population sugar-fed on entitlements doesn’t become a meek and thankful underclass. Also: people don’t like it when the police kill unarmed citizens. Which brings us to some domestic U.S. ineptitude…]

Two-and-a-half years ago, a (San Francisco) Bay Area Rapid Transit (BART) police officer shot and killed an unarmed man on a station platform in full view of a train full of riders (video). Sentenced to just two years for involuntary manslaughter, he was paroled in June. This week, upon learning of planned protests of the killing that might have disrupted service, BART officials cut off cell phone service in select stations, hoping to thwart the demonstrators.

[Update: A correspondent notes that the BART protest was in relation to another, more recent killing.]

The Electronic Frontier Foundation rightly criticized the tactic in a post called “BART Pulls a Mubarak in San Francisco.” It’s the same technique that deposed Egyptian dictator Hosni Mubarak used to try to prevent the uprising that toppled him.

What’s true in Egypt is true in the U.K. is true in the United States. People will use the new communications infrastructures—cell phone networks, social media platforms, and such—to express grievance and to organize.

Western government officials may think that our lands are an idyll compared to the exotic savagery of the Middle East. In fact, we have people being killed by inept law enforcement in the U.S. and the U.K. just like they have people being killed by government thugs in the Middle East. What seems like a difference in kind is a difference in degree—and it’s no difference at all to the dead.

Among the prescriptions that flow from the London riots and BART’s communications censorship is the intense need for greater professionalism and reform of police practices. Wrongful killings precipitate (rightful) protest and (wrongful) violence and looting. Public policies in the area of entitlements and immigration that deny people a stake in their societies need a serious reassessment.

But we also need to keep in mind the propensity of government officials—in all governments—to seek control of communications infrastructure when it serves their goals. From the perspective of the free-speaking citizen, centralization of communications infrastructure is a key weakness. It gives fearful government authorities a place to go when they want to attack the public’s ability to organize and speak.

The Internet itself is a distributed, packet-switched network that generally resists censorship and manipulation. Internet service, however, is relatively centralized, with a small number of providers giving most Americans the bulk of their access. In the name of “net neutrality,” the U.S. government is working to bring Internet service providers under a regulatory umbrella that it could later use for censorship or protest suppression. Platforms like Facebook and Twitter are also relatively centralized. It is an important security to have many of them, and to have them insulated from government control. The best insulation is full decentralization, which is why I’m interested in the work of the Freedom Box Foundation and open source social networks like Diaspora.

The history of communications freedom is still being written. Here’s to hoping that “a Mubarak” is always a failure to control people through their access to media.


The Hayek Surge Continues

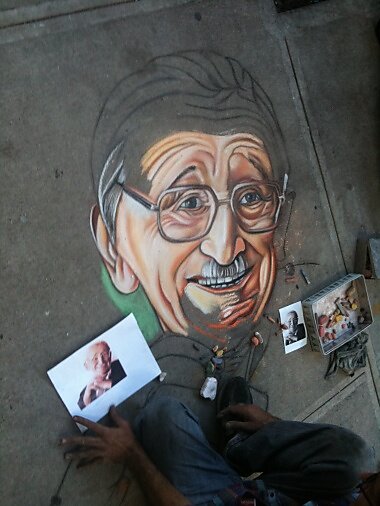

Seen in New York City — not near NYU, with its longstanding program in Austrian economics, but uptown near Columbia University, at 112th Street and Broadway — a sidewalk portrait of F. A. Hayek:

Hat tip: ThinkMarkets. For more on the reviving interest in Hayek, see here and here and here.


Give Credit Where Credit is Due

“The central bank has to be, in a way, a neutral player, and yet we find ourselves trying to stimulate, and the effect is further leveraging,” he said. “If I thought zero rates would bring jobs, I’d want it forever. But it distorts the economy.”

He continued, “In 2003, when we lowered rates and kept them there because unemployment was 6.5 percent — look at the consequences.” Those consequences included the nation’s mortgage feast, followed by its current economic famine.

Returning home, I read this comment from Bill Woolsey on David Beckworth’s blog:

I am a bit of an ABCT skeptic, but I am more and more concerned that using a commitment to keep interest rates low in the future is the most likely way to generate malinvestment. In my view, the way to avoid malinvestment despite errors in monetary policy is for people to understand that future short term rates will reflect future conditions. An investment project that is only profitable if short term rates are maintained into the future, is an error. And, by the way, having the Fed purchase long term bonds to directly lower long term rates has a similar problem.

Although I don’t call myself an Austrian economist, and am more than happy to find fault with arguments by self-styled “Austrians” that I think unsound, I can’t help feeling that Hayek deserves a lot more credit than he’s getting for having put forward a theory which, whatever its general merits may be, seems to fit the recent boom-bust experience so well. So far as I’m aware, Mr. Hoenig never mentions Hayek, and may not even be aware of the overlap between his own thinking and Hayek’s theory. Bill Woolsey, on the other hand, knows about the ABCT, sees the fit to recent experience, but remains a “skeptic.” I wonder whether he is merely indicating his disagreement with those more fervent proponents of the theory who seem to insist that it is the only valid theory of cycles.

In any event, it seems to me that anyone who believes that the recent bust is to some important extent a consequence of past malinvestment that was sponsored by easy monetary policy ought to acknowledge the fact that F.A. Hayek spent much of his early career warning against this very possibility, and later won a Nobel prize for the work in question. That something akin to his theory, if not the very thing itself, is now subscribed to by many non-Austrians, either with no mention of Hayek’s contribution or with only a somewhat grudging acknowledgment of it, seems to me both strange and unfair.


Is Obama Worse Than Carter and Bush?

Conservatives have become so furious with President Obama that they forget just how bad some of his predecessors were. One Jeffrey Kuhner, whose over-the-top op-eds in the Washington Times belie the sober and judicious conservatism you might expect from the president of the “Edmund Burke Institute,” writes most recently:

A possible Great Depression haunts the land. Primarily one man is to blame: President Obama.

Mr. Obama has racked up more than $4 trillion in debt.

Yes, he has. And that’s almost as much as the $5 trillion in debt rung up by his predecessor, George W. Bush. True, on an annual basis Obama is leaving Bush in the dust. But acceleration has been the name of the game: In 190 years, 39 presidents racked up a trillion dollars in debt. The next three presidents ran the debt up to about $5.73 trillion. Then Bush 43 almost doubled the total public debt, to $10.7 trillion, in eight years. And now the 44th president has added almost $4 trillion in two years and seven months. (Here’s an online video depicting each president’s debt accumulation as driving speed.) So Obama is winning the debt war, but it’s not like he caused the debt crisis or the unemployment crisis all by himself.

And then, trying to prove that Obama is even worse than Jimmy Carter — even worse than Jimmy Carter! — Kuhner makes this curious claim:

Most importantly, Mr. Carter had respect for the dignity and integrity of the presidency. He never trashed his opponents the way Mr. Obama does.

Really? Maybe Mr. Kuhner is too young to remember Carter, and didn’t bother to check his claim, or maybe he just got carried away. But I can remember October 1980, when President Carter repeatedly said that the election of Ronald Reagan would be “a catastrophe” that would mean an America

separated, black from white, Jew from Christian, North from South, rural from urban.

Liberal columnist Anthony Lewis asked in the New York Times, “Has there ever been a campaign as vacuous, as negative, as whiny? Probably so — somewhere back in the mists of the American Presidency. But it would take a good deal of research to come up with anything like Jimmy Carter’s performance in the campaign of 1980.” The venerable Hugh Sidey wrote in Time magazine, “The wrath that escapes Carter’s lips about racism and hatred when he prays and poses as the epitome of Christian charity leads even his supporters to protest his meanness.”

Obama is a big spender who portrays himself as a “beyond left and right” above-the-fray president trying to work with everyone while demonizing his opponents. But let’s not forget the meanness of Jimmy Carter and the spendthrift record of George W. Bush in seeking to establish Obama’s uniqueness.