“Massive jolts of New Deal spending had stopped the economic slide, [but the economy crashed again when] over two years, FDR slashed government spending 17 percent.” (From a 2011 NPR presentation.)

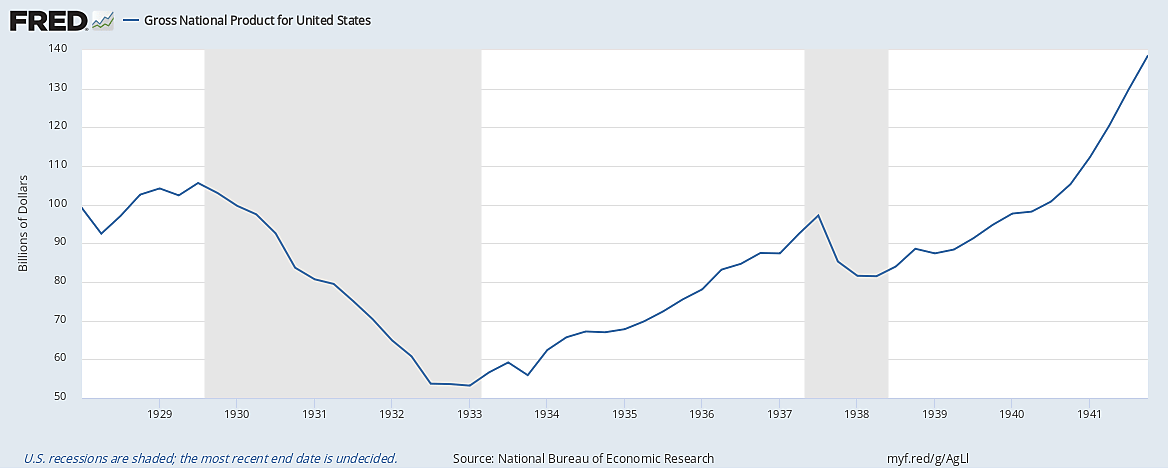

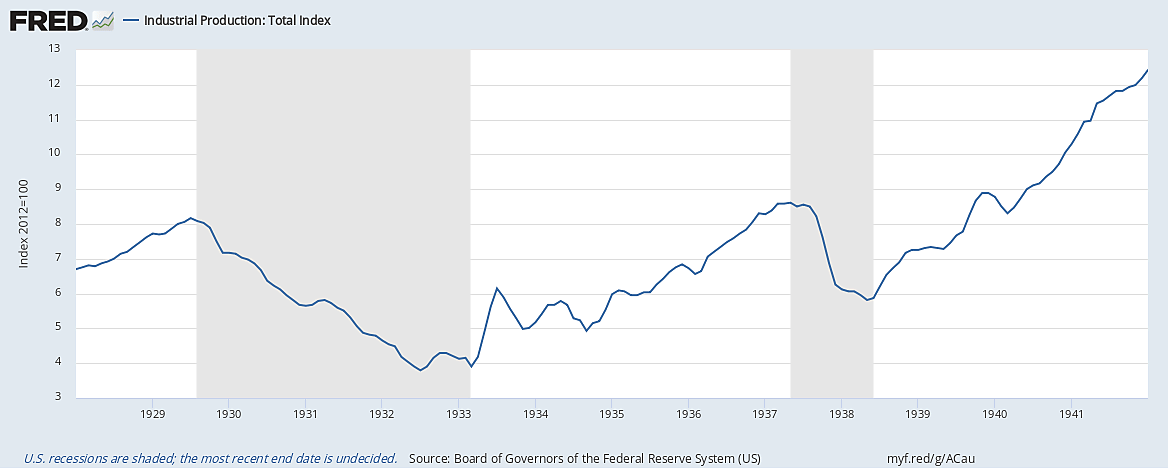

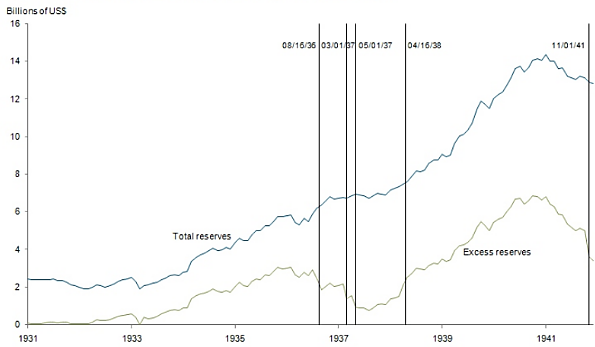

In the last installment of this series, I discussed the hypothesis that the 1937 collapse resulted from an ill-conceived tightening of monetary policy to which both the Fed and the Treasury contributed.

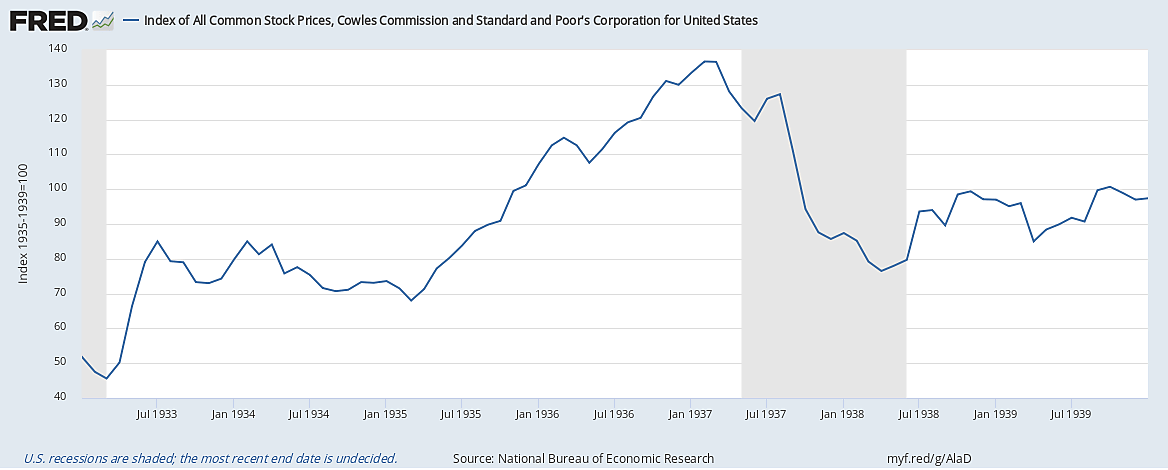

While authorities differ in the degree of responsibility they assign to each, there’s widespread agreement that, between them, instead of merely extinguishing a boom, as they intended to do, both Fed and Treasury officials helped bring about a crash that undid much of the post-1933 recovery.

But monetary explanations are far from the only ones that have been offered for the 1937 downturn. As I’ve said, there seems to be no end of potential culprits in that calamity. Here I consider some others, starting with the claim that the downturn was the result, not of monetary developments, but of a return to fiscal austerity.

Austerity, New Deal Style

Worries about over-speculation and looming inflation that led the Fed and the Treasury in 1936 to take up the cudgels against overly easy monetary policy had their fiscal counterpart in the fear that the federal debt was reaching unsustainable levels. The result was a renewed attempt to clamp down on deficits by reining in federal expenditures and collecting new taxes.

Although Treasury Secretary Henry Morgenthau was this effort’s prime mover, FDR was solidly behind it. “People complain to me,” the president told a gathering at New York’s Democratic Club that April:

about the current costs of rebuilding America, about the burden on future generations. I tell them that whereas the deficit of the federal government this year is about $3,000,000,000, the national income of the people of the United States has risen from $35,000,000,000 in the year 1932 to $65,000,000,000 in the year 1936, and I tell them further that the only burden we need to fear is the burden our children would have to bear if we failed to take these measures today.

In fact the difference between fiscal 1936 receipts and expenditures turned out to be almost twice Roosevelt’s $3,000,000,000 estimate, making for the largest peacetime deficit in the nation’s history, which, together with deficits run in each of the preceding five years, would raise the total federal debt to $34.5 billion.

So the government tightened the reins. Between September 1936 and October 1937, cuts reduced government spending by a quarter of a billion inflation-adjusted dollars, while an increased tax burden, due mainly to the Social Security tax first collected in January 1937, but also to the undistributed profits tax introduced in mid-1936, reduced private earnings. The Federal government’s net contribution to total earnings fell accordingly.

Fed officials were, not surprisingly, among those who tried to pin the blame for the ’37 downturn on the government’s decision to turn off its fiscal tap. Marriner Eccles, for example, attributed it to a “too rapid withdrawal of the government’s stimulus.” Eccles drew on a report on “Causes of the Recession” prepared by economist Lauchlin Currie, his personal assistant. “From 1934 to 1936,” Currie wrote, “the largest single factor in the steady recovery movement was the excess of Federal activity-creating expenditures over activity-decreasing receipts.” During 1936, those net outlays averaged $335 million per month. In contrast, from March through September 1937, the average was just $60 million a month. From this and other evidence, and after briefly examining other possible explanations, Currie concluded that the “withdrawal of the Government’s contribution” to overall spending was to blame for the recession.

Fiscal Policy: Molehill or Mountain?

The claim that, by withdrawing most of its fiscal stimulus, the government slowed economic activity, seems beyond dispute. But Currie’s further claim that fiscal retrenchment alone accounts for the severe 1937 downturn won’t stand close scrutiny. For one thing, by comparing net government outlays between March and September 1937 to those for 1936, he exaggerates the extent of the retrenchment. Thanks to the veterans’ Bonus Act passed (over FDR’s veto) in 1936, which ultimately added $1.7 billion to government expenditures, the government’s net contribution for 1936 was an exceptionally high $4.1 billion, as compared to $3.2 billion and $3.1 billion for 1934 and 1935, respectively. The period from March to September 1937, in contrast, was a low-water mark for net government expenditures: the government’s monthly contribution was still $264 million that January, and by mid-1938 it had bounced back to about $300 million per month, a value exceeding the averages for 1934 and 1935 and only slightly below the exceptionally high monthly average of $335 million for 1936.
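The baseline problem can be checked with a little arithmetic. The sketch below uses only figures quoted in the text; converting the 1934 and 1935 annual totals to monthly averages by dividing by twelve is a simplifying assumption, and the variable names are illustrative.

```python
# Figures quoted in the text, in millions of dollars. No new data is introduced.
ANNUAL_NET_CONTRIBUTION = {1934: 3200, 1935: 3100}  # annual totals
MONTHLY_1936 = 335         # Currie's monthly average for 1936
MONTHLY_TROUGH_1937 = 60   # Currie's monthly average for the 1937 trough
MONTHLY_MID_1938 = 300     # approximate mid-1938 level


def monthly_average(annual_total):
    """Assumption: spread an annual total evenly over twelve months."""
    return annual_total / 12


monthly_1934 = monthly_average(ANNUAL_NET_CONTRIBUTION[1934])
monthly_1935 = monthly_average(ANNUAL_NET_CONTRIBUTION[1935])
baseline_1934_35 = (monthly_1934 + monthly_1935) / 2

# Size of the monthly cutback measured against each baseline.
drop_vs_1936 = MONTHLY_1936 - MONTHLY_TROUGH_1937
drop_vs_1934_35 = baseline_1934_35 - MONTHLY_TROUGH_1937

print(f"1934 monthly average: ${monthly_1934:.0f} million")
print(f"1935 monthly average: ${monthly_1935:.0f} million")
print(f"Cutback vs. 1936 baseline: ${drop_vs_1936:.0f} million per month")
print(f"Cutback vs. 1934-35 baseline: ${drop_vs_1934_35:.1f} million per month")
print("Mid-1938 level above 1934 and 1935 averages:",
      MONTHLY_MID_1938 > max(monthly_1934, monthly_1935))
```

Measured against the more typical 1934–35 baseline, the monthly cutback comes to roughly $200 million rather than $275 million: smaller than Currie's comparison suggests, though hardly trivial.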

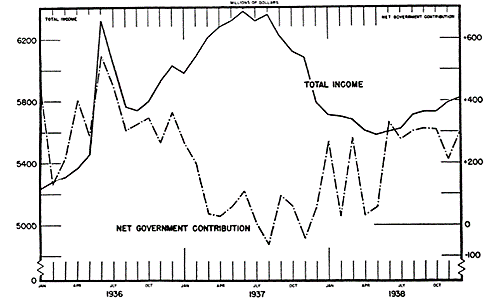

Even so, the cutback was substantial. But timing is another problem for Currie’s thesis. As Kenneth Roose, who was then teaching at UCLA, observed in a 1951 article, and as the chart reproduced from it below shows, there’s a lag of more than six months between the start of the decline in net government spending in December 1936 and the start of the decline in total income, and it wasn’t until January 1938 that monthly income fell below its high 1936 average. Yet a large proportion of government spending in the 1930s, including relief payments, became income with no lag at all; and contemporary estimates put the income lag for government payments of all sorts at just one month.

But the biggest problem with Currie’s thesis is his assumption that until 1937 “the largest single factor in the steady recovery movement was the excess of Federal activity-creating expenditures over activity-decreasing receipts.” That assumption turns out to be crucial, for if expansionary fiscal policy wasn’t an important driver of the pre-1937 recovery, so long as the government’s contribution remained positive, a decline in that contribution, however substantial, couldn’t possibly have been the main, let alone the sole, cause of the 1937 crisis.

In fact, as I pointed out in an earlier part of this series, fiscal policy didn’t contribute much to the 1933–37 recovery. To recall E. Cary Brown’s summing-up of his seminal findings, since confirmed by Christina Romer and others, far from driving that recovery, as a means for achieving recovery fiscal stimulus simply “wasn’t tried”: although the federal government’s net contribution to spending was certainly lower in 1937 than it had been in 1934 or 1935, it was no greater in those years than it had been in 1929! 1936 alone witnessed an exceptional fiscal contribution, thanks to the veterans’ bonus; but that bonus, which only began midway through the year, accounts at best for only a small part of the overall increase in income between April 1933 and May 1937. So while the post-1936 decline in net government spending probably contributed to the Roosevelt Recession, it can hardly be held solely responsible for it.

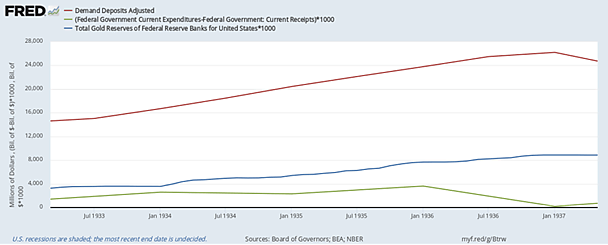

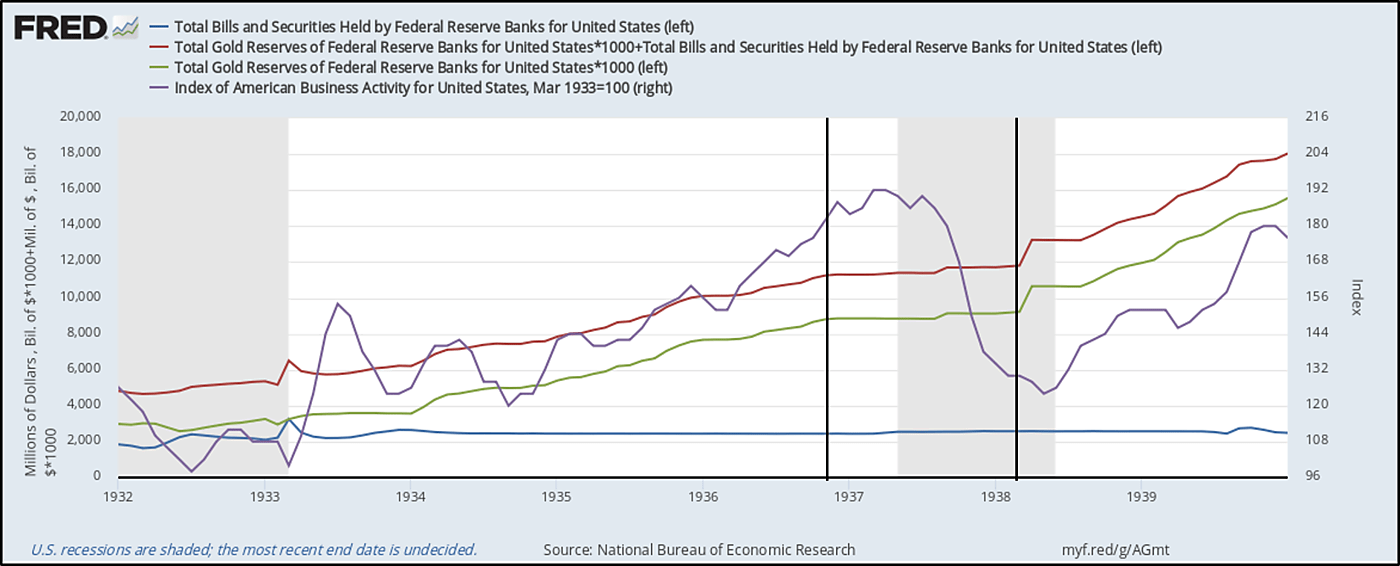

Finally, Currie’s attempt to deny that monetary policy contributed to the downturn is itself unsatisfactory. He notes that, from their nadir at the start of the bank holiday, demand (“checkable”) deposits grew by some $10 billion by the middle of 1936, and that this growth went hand-in-hand with growth in spending on consumer durables. So far so good. But Currie then claims that the build-up in deposits can only have been due to the government’s deficit spending; and that claim is neither logically sound nor consistent with the evidence. As I explained in yet another post for this series, and as the next chart shows, by adding to the nation’s gold reserves (blue line), gold imports contributed a lot more to demand deposits (red) than net government spending (green) did.

Parceling Out Blame

In a 2015 study Jonian Rafti, then a senior at Bard College, tried to weigh the respective fiscal and monetary contributions to the ’37 crash econometrically. He concluded that each was responsible for about half of the overall decline in income. But because he assumed a fiscal multiplier of 1.4, meaning that every $1 reduction in net government spending reduces total income by that amount plus 40 cents, Rafti’s estimate of the fiscal contribution is probably on the high side of the truth. At the other extreme, Romer, using statistical evidence of the effects of the 1936 veterans’ bonus, found a fiscal multiplier of just 0.233 (in contrast to her monetary multiplier estimate of 0.823), implying a much smaller fiscal contribution. Most other estimates of the government spending multiplier place it somewhere between Rafti’s and Romer’s estimates. So it’s likely that the cutback in net government spending was to blame for less, if not much less, than half of the ’37 downturn.
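To see how much the assumed multiplier matters, here is a rough sketch. It takes the two multipliers and Rafti's "about half" share from the text. The linear rescaling of that share is purely an illustrative assumption (it holds the fiscal cutback and the total income decline fixed), not a method used by Rafti or Romer, and the function names are hypothetical.

```python
RAFTI_MULTIPLIER = 1.4      # multiplier Rafti assumed, per the text
ROMER_MULTIPLIER = 0.233    # Romer's estimate, per the text
RAFTI_FISCAL_SHARE = 0.5    # "about half" of the income decline


def income_change(spending_change, multiplier):
    """Change in total income implied by a change in net government spending."""
    return spending_change * multiplier


def rescaled_fiscal_share(multiplier):
    """Illustrative assumption: scale Rafti's fiscal share in proportion
    to the multiplier, holding everything else in his estimate fixed."""
    return RAFTI_FISCAL_SHARE * multiplier / RAFTI_MULTIPLIER


for label, m in [("Rafti", RAFTI_MULTIPLIER), ("Romer", ROMER_MULTIPLIER)]:
    effect = income_change(-1.0, m)
    share = rescaled_fiscal_share(m)
    print(f"{label}: a $1 cut changes income by ${effect:.3f}; "
          f"implied fiscal share of the decline is {share:.1%}")
```

Under this crude rescaling, Romer's multiplier would put the fiscal share below a tenth of the decline, while any multiplier between the two estimates yields a share somewhere between that figure and one half.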

To be clear: this conclusion does not mean that a sufficiently expansionary fiscal policy couldn’t have prevented the Roosevelt Recession. However small the fiscal multiplier, a big enough boost in net government spending might in principle have made up for any decline in spending of other sorts. But saying this isn’t the same as saying that the recession was mainly brought about by the Roosevelt administration’s cutbacks and new taxes.

Striking it Poor

Because serious recessions generally involve correspondingly severe reductions in overall spending, it’s not surprising that economists seeking the cause of the ’37 collapse should look for it in either monetary or fiscal tightening. But adverse supply shocks can also cause, or at least aggravate, downturns, as happened when OPEC restricted its oil exports starting in 1973, and when the coronavirus struck last year. According to some experts, it happened in 1937 as well; and here again, the New Deal had something to do with it.

Several developments sent shock waves through the U.S. economy in 1937, striking the automobile industry especially hard. One was an increase in the cost of labor and raw materials. Thanks mainly to European rearmament, the materials needed to make a small car, which would have cost G.M., Ford, or Chrysler less than ninety dollars in the summer of 1936, cost almost 106 dollars a year later; and thanks mainly to more aggressive union tactics, labor costs rose even more.

Quite apart from their role in securing higher wage rates, some of those union tactics were themselves “shocking.” They took the form of numerous strikes, including many production-paralyzing “sit-down” strikes. The BLS recorded 4,740 strikes in all for 1937, an all-time record and more than twice the number in 1936. These led to the loss of almost 28.5 million man-hours of work, itself a record.

Sit-down strikes, the main cause of lost work hours, had been rare before the depression, and became common only after members of the United Auto Workers took over two Flint, Michigan, auto body plants on December 30, 1936. Between then and late February 1939, when the Supreme Court decided (in National Labor Relations Board (NLRB) v. Fansteel Metallurgical Corporation) that the NLRB lacked authority to order employers to reinstate workers fired after sit-down strikes, there were some 600 such strikes, thanks to which the automobile industry alone ultimately ended up losing about 4.5 million man-hours.

Strikes and the Wagner Act

The burst of strikes, and of sit-down strikes especially, is often attributed to the July 1935 passage of the National Labor Relations Act (NLRA), better known as the Wagner Act (after New York Senator Robert Wagner, who sponsored it). Harold Cole and Lee Ohanian, for example, claim that, by giving “even more bargaining power to workers than the NIRA,” the Wagner Act led to a significant increase in strike activity “particularly after the Supreme Court upheld its constitutionality in 1937.”

But the Wagner Act’s bearing on strike activity wasn’t so straightforward. For one thing, the timing is off: although FDR signed the act on July 5th, 1935, the total number of strikes, and the number of major sit-down strikes, didn’t rise considerably until the last weeks of 1936. Nor does Cole and Ohanian’s claim that the number of strikes rose “after the Supreme Court upheld [the NLRA’s] constitutionality in 1937” wash: in fact that number reached its zenith in March 1937, a month before the Supreme Court rendered its decision.

And while it’s certainly true that the NLRA strengthened workers’ collective-bargaining rights, it and the “little Wagner Acts” passed by state governments also established boards to adjudicate disputes over those rights. The whole point of those boards was to make it unnecessary for unions to resort to militant tactics. Consequently, as John Spielmans observes in a 1941 JPE article, as tempting as it may be to connect the strike wave to the New Deal’s “promise…to lend support to labor in its struggle,” treating that wave “as proof of the unhappy effects of the Wagner Act” would be “rash.”

The more subtle truth is that the Wagner Act encouraged strikes, not by granting workers more rights, but by failing to adequately clarify those rights. By upholding workers’ right to organize under “majority rule,” it sought to rule out employer-dominated “company” unions. But it left federal law regarding the legality of “closed shops,” meaning unions that compelled all workers to join them and take part in their tactics, unsettled. The National Labor Relations Board was therefore in no position to adjudicate disagreements between unions seeking to establish closed shops and employers bent on stopping them. Frustrated by what they considered the Wagner Act’s failure to deliver on its promises, and encouraged by FDR’s reelection in November 1936, union organizers decided to force the issue. The Flint strike was their big gamble; and that strike’s surprising success quickly led to hundreds of others like it.[1]

A Cost-Push Recession?

Between them, strikes, however encouraged, and the Wagner Act’s general strengthening of workers’ bargaining power, boosted firms’ labor costs. Benjamin Anderson considered this “[a] main factor on the industrial side in bringing the revival of 1935–1937 to a close.” So did Kenneth Roose. “In a free enterprise economy,” Roose observed in a 1948 article, “given sufficiently low profits, an increase in costs…can lead to an almost complete cessation in investment.” And that, he said, was what happened when input costs, “of which labor costs were the most important,” began rising during the first quarter of 1937. Cole and Ohanian also claim that union activity “significantly reduced firm profitability” both by temporarily shutting down plants and by boosting the cost of labor.

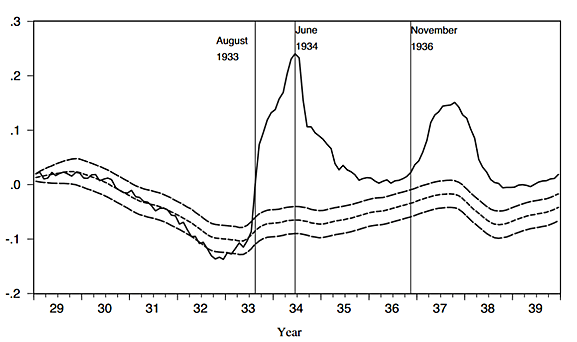

Could increased union activity really have boosted labor costs enough to throttle business investment? According to Chris Hanes, that seems to have been the case. In a paper published in the December 2020 Journal of Economic History, he estimates what he calls “projected average hourly earnings (AHE) inflation rates” for 1929–1939 using coefficients from a regression of AHE inflation for 1891–1914 and 1955–67 on the current and lagged unemployment rate, plus a dummy variable for the pre-WWI years. He also does the same thing using industrial production instead of unemployment, with almost identical results. Comparing his projected values with actual depression-era AHE inflation, he finds that the actual series rose well above the projections in 1933–1935 and again in 1937–1938. A chart from Hanes’ paper comparing the two series, with error bands surrounding his AHE projections, suggests a kneeling Bactrian camel, complete with GI tract:

Hanes goes on to consider possible explanations for the camel’s humps, ruling them all out save for New Deal labor policies. He finds that the first hump coincides with the dates of the NRA codes, and that the second coincides precisely “with a wave of strikes associated with unionization supported by New Deal labor policies.” Employers, Hanes says, “raised wages and overtime premiums as they entered into agreements with unions or attempted to forestall union threats.” And those wage hikes “were large,” especially compared to firms’ still-depressed profits. Hanes shows as well that a regression of his estimated AHE anomaly against the number of manufacturing workers involved in strikes and a small set of control variables fits the data extremely well, reproducing “not only the rise of anomalous [wage] inflation in 1933 but also the diminution of anomalous inflation in 1936 and the second round of anomalous inflation in late 1936–1937.”
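Hanes’ two-step exercise, as described above, can be written schematically as follows. This is only a sketch of the logic: the symbols are illustrative assumptions rather than Hanes’ own notation, and his actual specification (lag structure, data frequency, exact controls) may differ.

```latex
% Schematic only: all symbols are illustrative assumptions, not Hanes's notation.
% \pi_t = AHE inflation, u_t = unemployment rate, D_t = pre-WWI dummy,
% s_t = manufacturing workers involved in strikes, \mathbf{x}_t = control variables.
\begin{align}
\pi_t &= \alpha + \beta_0 u_t + \beta_1 u_{t-1} + \gamma D_t + \varepsilon_t
  && \text{(estimated on 1891--1914 and 1955--67)} \\
\hat{\pi}_t &= \hat{\alpha} + \hat{\beta}_0 u_t + \hat{\beta}_1 u_{t-1} + \hat{\gamma} D_t
  && \text{(projected for 1929--1939)} \\
a_t &= \pi_t - \hat{\pi}_t
  && \text{(anomalous wage inflation)} \\
a_t &= \delta_0 + \delta_1 s_t + \mathbf{x}_t' \boldsymbol{\theta} + \eta_t
  && \text{(anomaly regressed on strike activity)}
\end{align}
```

The camel’s humps are the stretches where $a_t$ lies well above zero, and the final regression asks how much of $a_t$ moves with strike activity $s_t$.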

In a somewhat earlier, complementary study, Joshua Hausman shows that the real consequences of labor supply shocks, and shocks to the auto industry especially, were also large. Automobile production, he notes, made up a substantially larger share (3.5 percent) of U.S. GDP in 1937 than it does today. Most car production inputs were also supplied domestically back then. Using a statistical model that fits pre-1937 auto sales to fiscal, monetary, and other economic determinants, Hausman finds that, had it not been for adverse auto-industry supply shocks, the Roosevelt Recession might only have been two-thirds as deep as it was in fact.

***

In “Murder on the Orient Express,” Hercule Poirot discovers that, except for the victim, the passengers on that train are all members or servants of the same family; and that, while some played bigger parts in the murder than the rest, all took part in it. As for what killed the 1933–37 economic recovery, one might say much the same thing: all of the suspect policies were guilty to at least some extent; and all were kith and kin of the New Deal.

_______________________

[1] The Wagner Act ultimately failed to secure not only unions’ right to establish closed shops, but their right to resort to sit-down strikes for any purpose. Although, with its April 12, 1937 NLRB v. Jones & Laughlin Steel Corp. decision, the Supreme Court declared the NLRA itself constitutional, not quite two years later, in NLRB v. Fansteel Metallurgical Corp., it held that the NLRB lacked the authority to compel employers to reinstate workers fired for taking part in sit-down strikes. Finally, the NLRA’s lack of clarity regarding the legality of closed shops was corrected by the 1947 Taft-Hartley Act, which made them illegal, while also prohibiting mass picketing, “wildcat” (or “quickie”) strikes, and various other former union tactics.